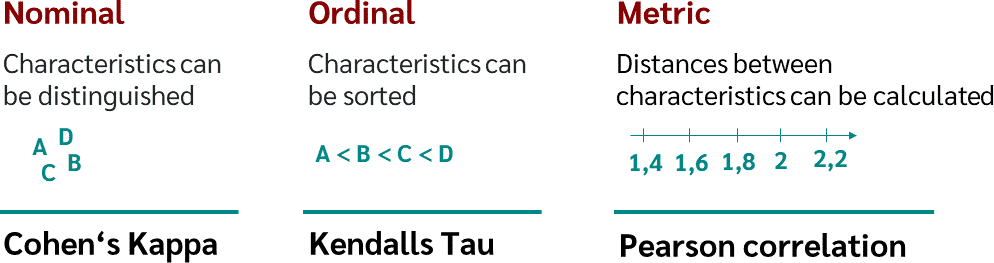

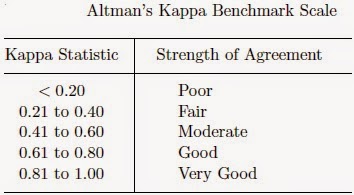

K. Gwet's Inter-Rater Reliability Blog: Benchmarking Agreement Coefficients

Inter-rater reliability: Cohen kappa, Gwet AC1/AC2, Krippendorff Alpha
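The caption above lists Gwet's AC1 next to Cohen's kappa. Here is a minimal sketch of AC1. It assumes two raters, nominal categories, and no missing ratings. AC1 changes only the chance-agreement term. It averages the two raters' marginal proportions into $\pi_k$ for each category and uses $p_e = \frac{1}{q-1}\sum_k \pi_k(1-\pi_k)$. The function name `gwet_ac1` and the sample ratings are hypothetical illustrations and are not taken from the blog.

```python
from collections import Counter
from typing import Hashable, Iterable, Optional, Sequence


def gwet_ac1(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable],
             categories: Optional[Iterable[Hashable]] = None) -> float:
    """Gwet's AC1 for two raters and nominal categories (hypothetical helper)."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("need two non-empty rating lists of equal length")
    n = len(rater_a)
    # Observed agreement p_a: share of items both raters placed in the same category.
    p_a = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    # q counts every category in play, including any listed but never used.
    cats = set(categories or ()) | set(count_a) | set(count_b)
    q = len(cats)
    if q < 2:
        raise ValueError("AC1 needs at least two categories")
    # pi_k: average of the two raters' marginal proportions for category k.
    pis = [(count_a[c] + count_b[c]) / (2 * n) for c in cats]
    # Chance agreement under Gwet's model: sum of pi_k * (1 - pi_k), divided by q - 1.
    p_e = sum(pi * (1 - pi) for pi in pis) / (q - 1)
    return (p_a - p_e) / (1 - p_e)


rater_a = ["yes"] * 9 + ["no"]
rater_b = ["no"] + ["yes"] * 9
print(round(gwet_ac1(rater_a, rater_b), 3))  # 0.756
```

In this skewed example the raters agree on 8 of 10 items. Cohen's kappa comes out at about −0.11 because its chance term is $p_e = 0.82$. AC1 comes out at about 0.76 because its chance term is 0.18. This is the kind of high-prevalence case where the two coefficients diverge.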

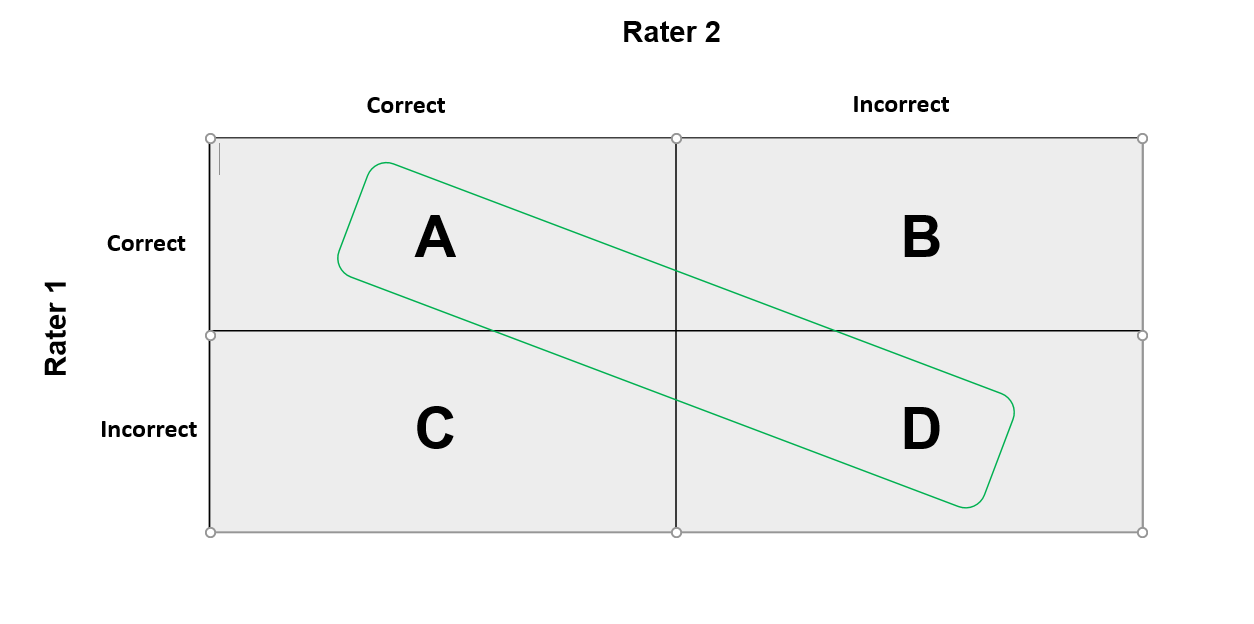

![[PDF] More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/79de97d630ca1ed5b1b529d107b8bb005b2a066b/1-Figure1-1.png)

[PDF] More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar

File:Comparison of rubrics for evaluating inter-rater kappa (and intra-class correlation) coefficients.png - Wikimedia Commons

![[PDF] Explaining the unsuitability of the kappa coefficient in the assessment and comparison of the accuracy of thematic maps obtained by image classification | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/31589e2ee1daaf23a836cfbfe61ec52e1f249075/6-Figure1-1.png)

[PDF] Explaining the unsuitability of the kappa coefficient in the assessment and comparison of the accuracy of thematic maps obtained by image classification | Semantic Scholar

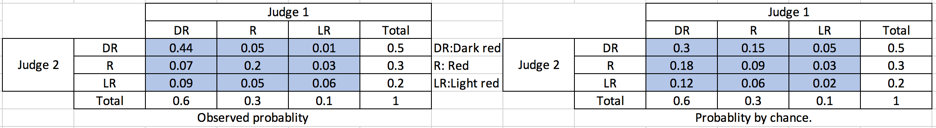

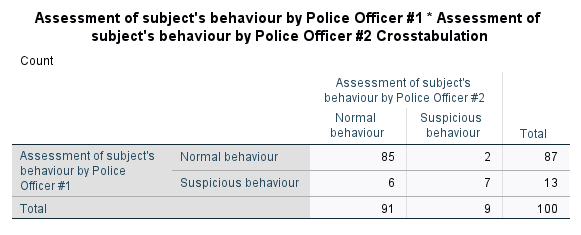

Kappa Coefficient for Dummies. How to measure the agreement between… | by Aditya Kumar | AI Graduate | Medium

GitHub - jiangqn/kappa-coefficient: A Python script to compute the kappa coefficient, which is a statistical measure of inter-rater agreement.
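The repository above computes this coefficient in Python. The snippet below is not that script. It is an independent minimal sketch of Cohen's kappa, assuming two raters who each give one nominal label per item with no missing ratings. The name `cohen_kappa` and the example ratings are hypothetical.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohen_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters labelling the same items (hypothetical helper)."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("need two non-empty rating lists of equal length")
    n = len(rater_a)
    # Observed agreement p_o: share of items on which both raters chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement p_e: product of the raters' marginal proportions, summed over labels.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    labels = set(count_a) | set(count_b)
    p_e = sum(count_a[c] * count_b[c] for c in labels) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


a = ["yes", "yes", "no", "no", "yes", "no"]
b = ["yes", "no", "no", "no", "yes", "yes"]
print(round(cohen_kappa(a, b), 3))  # 0.333
```

Here the raters agree on 4 of 6 items ($p_o = 2/3$). Both raters split 3/3, so $p_e = 1/2$, which gives $\kappa = 1/3$.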

The kappa coefficient of agreement. This equation measures the fraction... | Download Scientific Diagram
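The equation in that caption is cut off. For reference, Cohen's kappa for two raters is conventionally written as shown below. Here $p_o$ is the observed proportion of agreement, and $p_{k+}$ and $p_{+k}$ are the two raters' marginal proportions for category $k$ out of $q$ categories. This is the standard textbook form and not necessarily the notation used in the diagram.

$$
\kappa = \frac{p_o - p_e}{1 - p_e}, \qquad p_e = \sum_{k=1}^{q} p_{k+}\, p_{+k}
$$

The numerator is the agreement achieved beyond chance. The denominator is the largest agreement beyond chance that was possible.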

![Suggested ranges for the Kappa Coefficient [2]. | Download Table](https://www.researchgate.net/publication/325603545/figure/tbl2/AS:669212804653076@1536564174670/Suggested-ranges-for-the-Kappa-Coefficient-2.png)