Safety

Blog

Is Reddit safe? A complete safety guide for 2025

Reddit is one of the largest online platforms in the world, where millions of users come together daily to share stories, ask questions, post updates, and exchange opinions on virtually every topic imaginable. In general, Reddit is a legitimate site with strong rules, and it’s supported by a large and active user base that helps report and remove harmful or misleading…

Blog

Baby Bath Safety: What Parents Should Know

The most current data shows more than 43,000 children under the age of 18 are treated for injuries occurring in the bath or shower. Children 4 years old or younger, including infants, are at a higher risk of these incidents, accounting for over half of injuries. The most common cause of injury was a slip, trip, or fall, but in…

Blog

Safety and Security Products for Airbnb and Hotel Stays

Call me paranoid, but incidents of nonworking monitors, hidden cameras, and the like aren’t unheard of. As recently as 2024, a family in Florida told a harrowing story of having to use the balcony to exit an Airbnb after alarms didn’t go off during a fire. CNN published an investigation into a short-term rental company’s lack of appropriate response when vacationers…

Blog

Why Should We Save the Consumer Product Safety Commission?

A government agency responsible for protecting Americans from unsafe products now needs protection itself. The Consumer Product Safety Commission (CPSC) has oversight of more than 15,000 categories of products whose safety we tend to take for granted—including things like hair dryers, power adapters, and candles, all of which have been the subject of recent recalls by the agency. The CPSC…

Blog

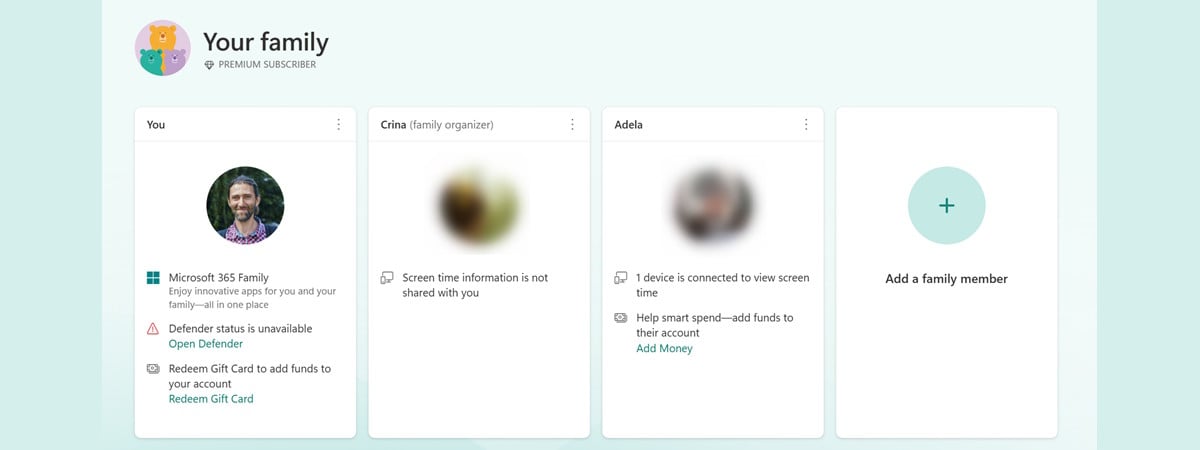

How to use Windows Family Safety to manage your child’s PC time and activity

Windows 11 and Windows 10 include a set of parental control tools designed to help you guide and manage your child’s digital habits. These tools are part of Microsoft’s Family Safety service and let you set daily screen time limits, restrict or block specific apps, and receive detailed reports about your child’s PC usage. Everything is tied to your Microsoft…

Blog

Safety 1st Car Seat Recall: Choking Hazard on Headrest

The company will send a replacement headrest pad assembly kit to affected owners, but in the meantime, you can still use your Safety 1st Grow and Go all-in-one car seat until the replacement kit arrives, the company says. Just be sure to keep an eye on the foam headrest pad and check that your child isn’t able to pick off…

Blog

Best Luxury Crossovers With Advanced Safety Features in 2025

The luxury segment represents the automotive industry’s most advanced vehicles, especially when it comes to technology. In a fast-moving world, driver assists and safety features in crossovers are much more impressive than they used to be, capable of taking control and mitigating accidents. There are a number of luxury automakers that have invested a ton of money and research into…

Blog

Top 10 2025 Crossovers With the Most Advanced Safety Features

Technology in cars is becoming incredibly advanced, with driver assists and safety features making vehicles safer than ever before. However, not everyone is on an equal playing field and there are certain crossovers that have more advanced safety features than their rivals. All automotive technology has advanced at an insane rate in the last ten years, with driver assists being…

Blog

EU AI Rules Delay Tech Rollouts, But Civil Society Says Safety Comes First

Tech companies are adamant that the regulation of artificial intelligence in the E.U. is preventing its citizens from accessing the latest and greatest products. However, a number of civil society groups feel otherwise, maintaining that AI developers need to produce products that uphold their customers’ safety and privacy. Some of tech giants’ delayed launches in EU: There have been a…

Blog

Bike Riding Safety Tips for New and Experienced Riders

Keep in mind that safety doesn’t just mean protective equipment; it’s also about how cyclists interact with motorists, pedestrians, and other cyclists. Obey the law. The League of American Bicyclists reminds riders, “You have the same rights and responsibilities as drivers.” This means that cyclists have to follow all traffic laws and obey street signs, signals, and road markings. Ride…
