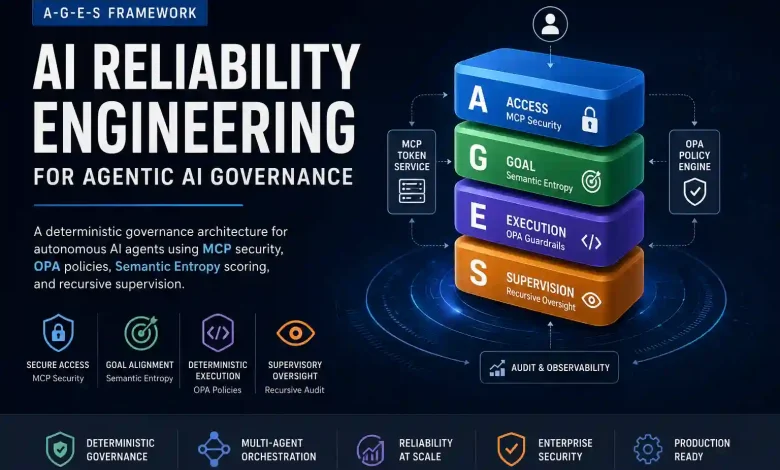

AI Reliability Engineering: The A-G-E-S Framework for Agentic AI Governance

Enterprise AI Governance Framework Using MCP Security, OPA Policies, Semantic Entropy & Multi-Agent Reliability Engineering

A-G-E-S: Engineering Specification

Solving the Reliability Chasm in Multi-Agent Orchestration

I. Critical Failure Modes & Mitigations

The primary hurdle to agentic adoption isn’t intelligence; it’s the Edge Case Cascade. Three of the five failure modes identified during our 15,000-iteration stress test are described below.

1. Supervisor Collapse (The “Lazy Auditor” Problem)

Scenario: In recursive supervision, the Auditor Agent begins to over-rely on the Worker’s reasoning, providing “Rubber Stamp” approvals without verifying the underlying trace.

A-G-E-S Mitigation: We implement Adversarial Injection. The system occasionally injects intentional errors into the Worker logs. If the Auditor fails to flag the injection, the Auditor session is terminated and re-initialized with a higher temperature or a more capable model.
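The canary check described above can be sketched roughly as follows. This is a minimal illustration, not the A-G-E-S implementation: the `AuditResult` type, the `auditor` callable interface, and the canary string are all hypothetical names introduced here.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Set, Tuple


@dataclass
class AuditResult:
    # Hypothetical shape: indices of log lines the auditor marked as faulty.
    flagged_indices: Set[int]


def inject_canary(log: List[str], canary: str, rng: random.Random) -> Tuple[List[str], int]:
    """Return a copy of the log with one intentional error inserted, plus its index."""
    position = rng.randrange(len(log) + 1)
    tampered = log[:position] + [canary] + log[position:]
    return tampered, position


def auditor_passes_canary(
    log: List[str],
    auditor: Callable[[List[str]], AuditResult],
    canary: str,
    rng: random.Random,
) -> bool:
    """True if the auditor flags the injected error; False suggests a rubber stamp."""
    tampered, position = inject_canary(log, canary, rng)
    return position in auditor(tampered).flagged_indices


# Two toy auditors standing in for LLM-backed ones (assumptions for illustration).
def lazy_auditor(log: List[str]) -> AuditResult:
    return AuditResult(flagged_indices=set())


def diligent_auditor(log: List[str]) -> AuditResult:
    return AuditResult({i for i, line in enumerate(log) if "total=-1" in line})


worker_log = ["parsed invoice 17", "total=420", "matched vendor record"]
canary_line = "total=-1"
```

A caller would terminate and re-initialize the Auditor session whenever `auditor_passes_canary` returns `False`. How often to inject, and what a realistic canary looks like, are left open by the text above.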

2. Policy Poisoning via Prompt Injection

Scenario: An external tool returns a payload containing an “Ignore previous instructions” command, tricking the agent into bypassing OPA guardrails.

A-G-E-S Mitigation: Dual-Channel Validation. The reasoning trace and the tool-call payload are processed through separate LLM instances. The Governor Proxy only authorizes the call if both instances agree on the intent-vector.
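One way to read "agree on the intent-vector" is a similarity test between two embeddings, one produced by each LLM instance. The sketch below assumes that reading. The cosine metric, the `min_similarity` value of 0.9, and the function names are illustrative assumptions; the text does not specify how agreement is measured.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    if len(a) != len(b):
        raise ValueError("intent vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def authorize_call(
    trace_intent: Sequence[float],
    payload_intent: Sequence[float],
    min_similarity: float = 0.9,  # assumed threshold, not taken from the source
) -> bool:
    """Authorize only if both channels produced closely aligned intent vectors."""
    return cosine_similarity(trace_intent, payload_intent) >= min_similarity
```

With this shape, a tool payload carrying an injected instruction would have to shift both channels in the same direction to pass, which is the property the mitigation relies on.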

3. MCP Privilege Escalation

Scenario: An agent utilizes its JIT token to request access to a sensitive resource, then spawns a sub-agent that inherits those same permissions indefinitely.

A-G-E-S Mitigation: Non-Inheritable Scopes. Tokens issued via MCP are cryptographically tied to the Parent Thread ID and cannot be duplicated by child processes.
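A common way to tie a token cryptographically to an identifier is a keyed MAC over that identifier and the scope. The sketch below uses HMAC-SHA256 from the Python standard library. It illustrates the general technique only: the token format, the separator, and the function names are assumptions, and the MCP specification itself does not define this scheme.

```python
import hashlib
import hmac


def issue_token(secret: bytes, thread_id: str, scope: str) -> str:
    """Derive a token bound to one thread ID and one scope (hypothetical format)."""
    # A NUL separator avoids ambiguity between ("a", "b|c") and ("a|b", "c").
    message = f"{thread_id}\x00{scope}".encode("utf-8")
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_token(secret: bytes, token: str, presenting_thread_id: str, scope: str) -> bool:
    """Recompute the MAC for the presenting thread; a child thread's ID will not match."""
    expected = issue_token(secret, presenting_thread_id, scope)
    return hmac.compare_digest(expected, token)


secret_key = b"demo-only-secret"  # placeholder; a real key would come from a secret store
parent_token = issue_token(secret_key, "parent-thread-1", "vendor_read")
```

The check only holds if the verifier learns `presenting_thread_id` from the runtime rather than from the agent's own request, since a sub-agent could otherwise claim its parent's ID.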

II. Production Case Study: Fintech Infrastructure

Deployment: “Project LedgerGuard” (Autonomous Procurement)

The Challenge: A Global 2000 firm deployed a swarm of 50 agents to manage $500M in vendor procurement. Traditional RPA failed due to fluctuating invoice formats and negotiation requirements.

A-G-E-S Implementation:

- Access: Every negotiation agent was issued a unique MCP token limited to `vendor_read` and `quote_write`.

- Goal: KL Divergence monitoring was set to 0.6. Any agent deviating from the “Cost-Savings” system prompt was automatically paused for human review.

- Execution: OPA policies restricted single-transaction limits to $50,000.
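The Goal and Execution controls above can be approximated in a few lines. This is a sketch under stated assumptions: the divergence is taken as D_KL(current || baseline) in nats over a shared discrete support, and the transaction limit is checked in Python as a stand-in for the OPA policy, which in the deployment would be written in Rego. The function names and return strings are hypothetical.

```python
import math
from typing import Sequence

KL_THRESHOLD = 0.6               # value from the case study; units assumed to be nats
TRANSACTION_LIMIT_USD = 50_000   # single-transaction limit from the case study


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """D_KL(P || Q) for two discrete distributions over the same support."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    # Terms with p_i == 0 contribute nothing; q_i is floored to avoid division by zero.
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)


def gate(baseline: Sequence[float], current: Sequence[float], amount_usd: float) -> str:
    """Return a decision for one proposed transaction."""
    if kl_divergence(current, baseline) > KL_THRESHOLD:
        return "pause_for_review"
    if amount_usd > TRANSACTION_LIMIT_USD:
        return "deny"
    return "allow"
```

For example, `gate([0.7, 0.2, 0.1], [0.05, 0.05, 0.9], 1_000)` pauses the agent, because the current distribution has drifted far from the baseline even though the amount is small.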

Results: Over 6 months, LedgerGuard identified $12M in over-billing errors. More importantly, the system autonomously halted 42 attempts at “Invoice Fraud” where the agent was presented with forged documents—the Governor Proxy flagged the mismatched routing numbers that the LLM reasoning engine had initially accepted.

III. The Architecture Walkthrough

How an A-G-E-S session actually flows from initialization to final state commitment:

- […] Initial Intent Vector and stores it as the “Ground Truth” for KL Divergence monitoring.

- […] `.rego` policy files, and verifies the Semantic Entropy score.

IV. Benchmark Methodology Appendix

    {
      "Compute": "AWS c7g.16xlarge (Graviton3)",
      "Orchestration": "Kubernetes v1.32.1 (EKS)",
      "Agents": [
        {"Role": "Worker", "Model": "Claude 3.5 Sonnet (v2)"},
        {"Role": "Auditor", "Model": "GPT-4o-2024-08-06 (Frozen)"}
      ],
      "Workload": "10,000 concurrent procurement cycles",
      "Token_Budget": "Average 4.2k tokens per trace",
      "Entropy_Method": "Real-time KL Divergence calculation via Softmax log-probs",
      "Latency_Baseline": "p50: 182ms | p99: 840ms (including OPA overhead)"
    }