Claude vs ChatGPT vs Gemini vs Grok

Why most production AI systems fail because of state management, prompt decay, tool instability, and orchestration complexity — not raw model intelligence.

Claude vs ChatGPT vs Gemini vs Grok [2026]: The Prompt Entropy Problem

Most production AI failures have nothing to do with intelligence.

The models are usually smart enough.

The real problem is state management. Tool calls break. Prompts mutate. Context degrades. Retrieval drifts. Eventually, nobody can debug the agent anymore.

Most AI infrastructure problems are not model problems. They’re state-management problems disguised as prompting problems.

Most teams still evaluate AI models as if benchmark spreadsheets were the product. In production, the benchmark usually stops mattering after the second month. The real issue is what happens after hours of tool calls, retries, schema drift, and accumulating prompt patches.

Here is what the landscape actually looks like when you try to keep these systems alive in production.

What Nobody Admits About AI Infrastructure

Most enterprise AI systems are held together by prompts nobody wants to edit anymore.

By month six, most enterprise prompts stop looking like software specifications. They look like geological layers. Emergency patch on top of emergency patch. Nobody remembers what the bottom layers were originally trying to solve.

The system accumulates defensive instructions forever because the engineering team is terrified to touch the orchestration layer. One production prompt we inherited literally contained this line:

"If the user mentions refunds AND attachments exist, summarize the thread before calling the escalation tool unless the ticket originated from Stripe."

Nobody on the team remembered why that rule existed, but removing it caused an unrelated accounting tool call to fail. We watched that single agent accumulate a 14,000-token system prompt after four months of incremental patches.

That is the part of AI deployment that benchmark charts never measure. Every long-running AI system eventually becomes a sedimentary-rock problem: layers of prompt patches nobody can safely dig through.
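One way to keep those layers legible is to store each defensive instruction as a record with its reason and owner, then assemble the prompt from the records. The sketch below is only an illustration of that idea: `PromptRule`, `build_system_prompt`, `rules_without_reason`, the team name, and the date are all hypothetical, not something from the system described above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PromptRule:
    """One defensive instruction plus the context needed to revisit it later."""
    text: str    # the instruction as the model sees it
    reason: str  # why it was added (incident, ticket, bug)
    owner: str   # who to ask before removing it
    added: date


def build_system_prompt(base: str, rules: list[PromptRule]) -> str:
    """Assemble the prompt from the base text and the rule texts only.

    Provenance stays in the codebase, not in the context window.
    """
    return "\n".join([base, *(f"- {rule.text}" for rule in rules)])


def rules_without_reason(rules: list[PromptRule]) -> list[PromptRule]:
    """Rules nobody can explain are the first candidates for an audit."""
    return [rule for rule in rules if not rule.reason.strip()]


rules = [
    PromptRule(
        text="If the user mentions refunds AND attachments exist, summarize "
             "the thread before calling the escalation tool unless the "
             "ticket originated from Stripe.",
        reason="",                 # unknown, which is exactly the problem
        owner="support-platform",  # hypothetical team name
        added=date(2025, 1, 15),   # hypothetical date
    ),
]
prompt = build_system_prompt("You are a support triage agent.", rules)
```

Running `rules_without_reason(rules)` in CI gives you a list of layers that nobody can justify anymore, which is a cheaper starting point than reading a 14,000-token prompt top to bottom.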

The Generic Wrapper Trap

A frequent mistake is trying to build a completely model-agnostic layer. Teams get terrified of vendor lock-in, so they write an abstraction to hot-swap ChatGPT for Claude tomorrow.

In theory, this is best practice. In reality, you end up sacrificing the exact features that made the models useful in the first place. You lose OpenAI’s strict schema enforcement. You lose Anthropic’s native computer-use APIs.

You are left with a fragile system that breaks the second a model decides to output a markdown block instead of raw text.
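A middle path is to keep a thin routing layer but make provider-specific capabilities explicit instead of hiding them. The following is a minimal sketch under that assumption: the `Capability` flags, the `Provider` record, and `pick_provider` are hypothetical names, and the capability assignments simply mirror the two features mentioned above rather than any official feature matrix.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Capability(Enum):
    STRICT_JSON_SCHEMA = auto()
    COMPUTER_USE = auto()


@dataclass(frozen=True)
class Provider:
    name: str
    capabilities: frozenset[Capability]


# Illustrative registry; adjust to whatever your vendors actually support.
PROVIDERS = [
    Provider("openai", frozenset({Capability.STRICT_JSON_SCHEMA})),
    Provider("anthropic", frozenset({Capability.COMPUTER_USE})),
]


def pick_provider(providers: list[Provider], required: set[Capability]) -> Provider:
    """Return the first provider that supports every required capability."""
    for provider in providers:
        if required <= provider.capabilities:
            return provider
    names = sorted(capability.name for capability in required)
    raise LookupError(f"no provider supports {names}")
```

The point of the design is that a workflow declares what it needs, and a swap that would silently drop a feature fails loudly at routing time instead of at 2 a.m. in production.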

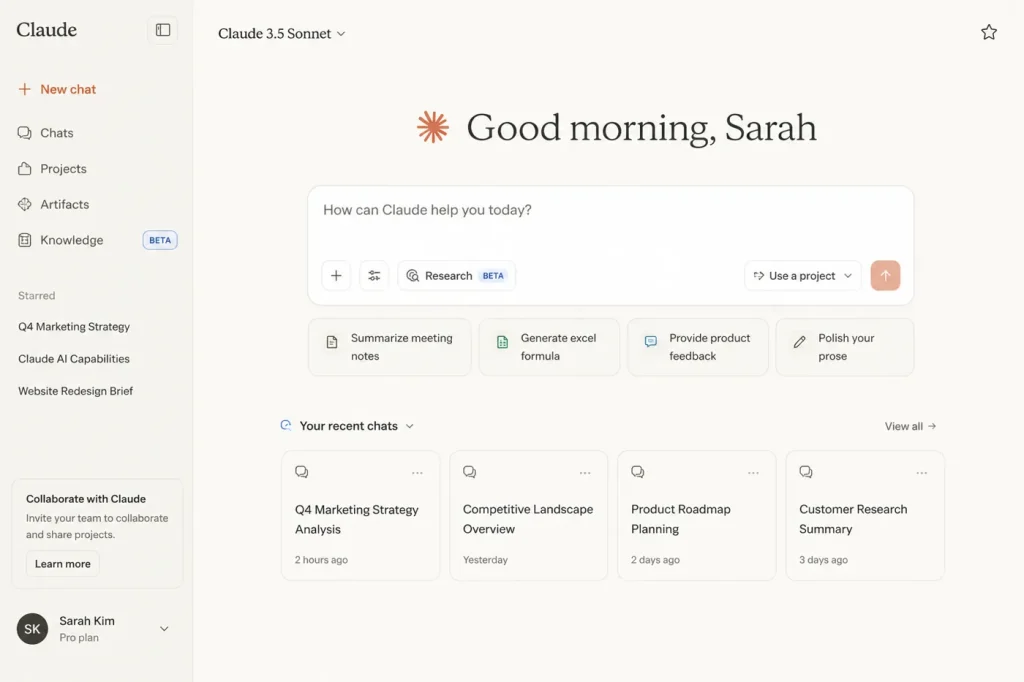

Claude: The Agentic Standard

Among teams building agentic developer tooling, Claude Sonnet currently appears to have the strongest momentum, mostly because of its long-context steerability and reliable tool behavior.

When executing multi-step logic, Claude is simply less likely to guess a parameter when it gets confused. It tends to halt and surface an error rather than inventing a hallucinated JSON object just to keep the workflow moving.

But it’s not perfect. Claude is highly sensitive to formatting changes. If your team starts stacking messy, unformatted instructions inside the context window, instruction drift accelerates faster than expected.

The Production Scar: We moved a multi-agent coding workflow to Claude because of its reasoning depth when navigating repositories, the same strength that shows up in tools like Cursor, Windsurf, and Claude Code. But under heavy concurrency spikes, we hit Claude's API rate limits much sooner than we had on OpenAI's infrastructure. We spent four days building fallback queues just to handle transient 429 errors we weren't expecting.
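The core of a fallback like that is usually a retry loop with exponential backoff and jitter. Here is a minimal sketch, not the queue we actually built: `RateLimited` is a stand-in for whatever exception your client raises on HTTP 429, and `call_with_backoff` with its default delays is a hypothetical helper.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Stand-in for whatever your client raises on HTTP 429."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry fn on RateLimited using exponential backoff with full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller route to a fallback
            # Double the ceiling each attempt, capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter spreads retries out so workers do not stampede.
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the retry path can be tested without waiting. When the final attempt still fails, the exception propagates, which is where a fallback queue or a second provider would take over.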

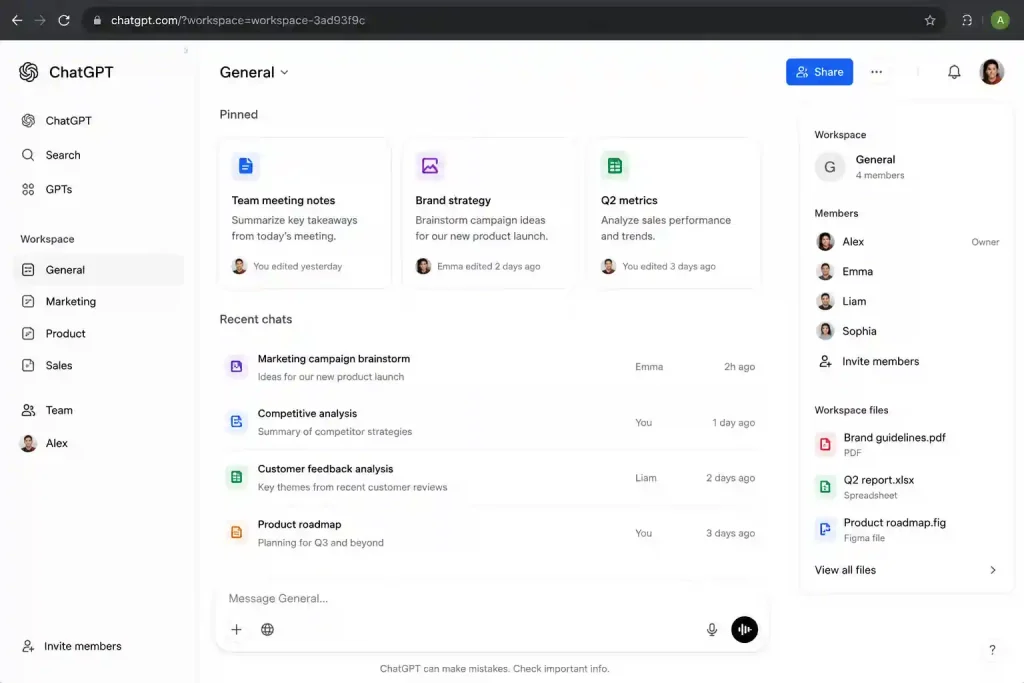

ChatGPT (OpenAI): The Boring Reliable Layer

OpenAI is still the default for most companies, but the way we use it has shifted. It has essentially become the boring reliable layer of the industry.

Half of prompt engineering is just compensating for bad product design. If you need a model to return structured data, writing paragraphs begging it to “only return valid JSON” is a losing battle.

OpenAI wins here because of its schema-native inference. With strict structured outputs enabled, generation is constrained to your JSON schema at the engine level, so you don't have to waste prompt space on formatting instructions.

The Production Scar: Last year, one extraction pipeline silently corrupted 11,000 rows because an open-weight model inserted trailing commas into nested JSON. Nobody noticed until downstream analytics started failing three days later. Moving that specific ingestion step back to OpenAI's structured outputs dropped our validation failures to zero. If you are weighing these two ecosystems against each other, look closely at how each one handles strict parsing constraints in production.
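Whatever model sits upstream, a strict parse at the ingestion boundary would have caught that failure on day one instead of day three. The sketch below assumes each model response is a raw string that should contain one JSON object; `split_valid_rows` is a hypothetical helper, and it relies only on the standard library's `json.loads`, which rejects trailing commas.

```python
import json
from typing import Any


def split_valid_rows(
    raw_rows: list[str],
) -> tuple[list[dict[str, Any]], list[tuple[int, str]]]:
    """Parse each model output strictly and quarantine anything malformed.

    Returns (valid_rows, rejected), where rejected holds (index, reason).
    """
    valid: list[dict[str, Any]] = []
    rejected: list[tuple[int, str]] = []
    for index, raw in enumerate(raw_rows):
        try:
            parsed = json.loads(raw)  # strict: trailing commas raise here
        except json.JSONDecodeError as exc:
            rejected.append((index, f"invalid JSON: {exc.msg}"))
            continue
        if not isinstance(parsed, dict):
            rejected.append((index, "expected a JSON object"))
            continue
        valid.append(parsed)
    return valid, rejected


valid, rejected = split_valid_rows([
    '{"id": 1, "meta": {"ok": true}}',
    '{"id": 2, "meta": {"ok": true,},}',  # trailing commas in nested JSON
])
```

Alerting on a non-empty `rejected` list, rather than letting a lenient parser "repair" the row, is what turns silent corruption into a visible failure.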

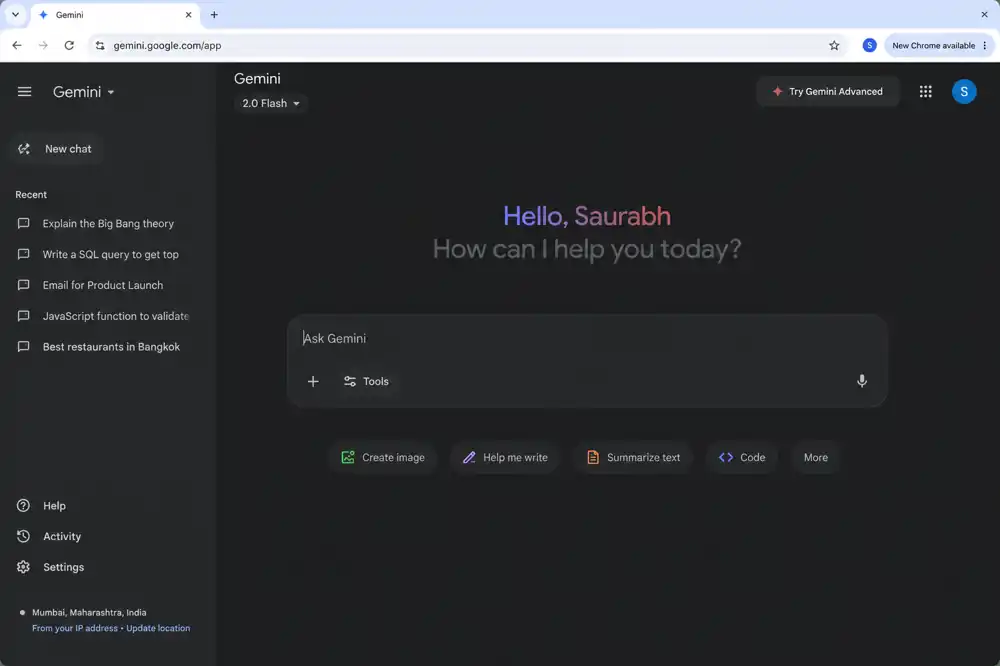

Gemini: The Multimodal Data Sink

We originally thought the massive context window was mostly marketing. It turned out to be genuinely useful for ugly internal datasets nobody wanted to chunk correctly.

Many RAG systems exist only because teams copied architecture diagrams from Twitter. If your entire repository of internal company docs fits inside 2 million tokens, you probably don’t need a complex vector database right now.
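A back-of-the-envelope check is often enough to settle that question before anyone draws an architecture diagram. This sketch assumes roughly four characters per token, which is a common rule of thumb for English text and not a property of any particular tokenizer. `estimate_tokens`, `fits_in_context`, and the `reserve` default are hypothetical.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; real counts depend on the tokenizer."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(
    documents: list[str],
    context_limit: int = 2_000_000,
    reserve: int = 50_000,
) -> tuple[bool, int]:
    """Check whether the corpus fits, leaving `reserve` tokens for the
    instructions and the model's answer. Returns (fits, estimated_total)."""
    total = sum(estimate_tokens(document) for document in documents)
    return total + reserve <= context_limit, total
```

If the estimate lands anywhere near the limit, count with the provider's real tokenizer before committing to the design.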

For some workloads, Gemini changes the baseline by letting you dump unchunked media (a 45-minute raw video, or hundreds of PDFs) straight into the prompt. We ended up writing a separate breakdown on where large context windows actually replace RAG infrastructure in practice.

But it’s a brutal trade-off when it comes to speed.

The Production Scar: Feeding 1.5 million tokens into Gemini avoids the engineering cost of building a vector database, but the time-to-first-token latency is high. We tried using it for an interactive user dashboard and had to scrap it. Users won’t stare at a loading spinner for 18 seconds.

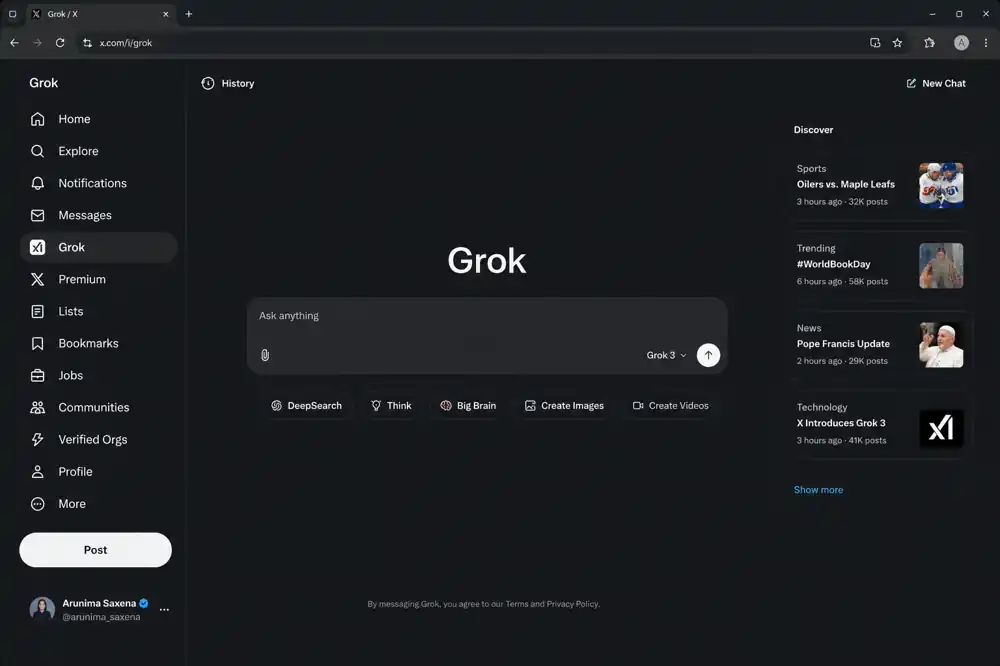

Grok: The Real-Time Niche

Grok’s primary value is direct, unmetered access to the live X (Twitter) data stream.

If you are building automated risk mitigation systems to track live PR events, or parsing market sentiment changes, Grok gives you a data layer other vendors can’t match due to licensing restrictions.

The Production Scar: We tested Grok on internal financial reports and noticed a distinct operational limitation: it handled live event context significantly better than static documentation. It was unusually good at tracking narrative shifts in public conversations, but noticeably weaker at dense, retrieval-heavy workflows. Attempts to run standard enterprise documentation RAG pipelines through it resulted in uneven document recall and hallucinated answers.

The Baseline Matrix

| Model | Where it usually makes sense | Where it usually breaks |
| --- | --- | --- |
| Claude (Sonnet) | Autonomous agents, IDE integration, complex workflows. | Sudden load spikes; prompt complexity accumulates faster than expected. |
| ChatGPT (OpenAI) | Strict JSON extraction, high-volume conversational interfaces. | Deep, multi-turn reasoning chains compared to Anthropic. |
| Gemini (Pro) | Processing unchunked video, audio, or massive document dumps. | Interactive user workflows requiring sub-second response times. |
| Grok | Real-time social sentiment, live public crisis tracking. | Complex internal pipelines; dry corporate data. |

The 6-Month Reality Check

Most enterprise AI demos never survive contact with real users. When you move from a local prototype to production, the things that break usually aren’t the things you tested for.

At first, we assumed retrieval quality was the issue. It wasn’t. The real issue was that the agent had stopped respecting earlier tool constraints after the prompt grew past a certain size.

- Overbuilding Early: Teams frequently deploy complex hybrid vector search for 50MB of internal PDFs. Don't build infrastructure you don't need yet.
- Version Deprecation: Cloud providers retire model versions. When you are forced to upgrade, the new model's baseline behaviors change slightly. If you don't have an automated test suite to catch the resulting structural regressions, your application will break in unpredictable ways.
- Observability Debt: When an agent loops or fails in production, and you only have basic API latency logs, debugging degenerates into guessing. You need state tracing from day one.
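State tracing does not have to start as a vendor product. As a minimal sketch of the idea, the recorder below logs every step of a run alongside the prompt version and flags tools that are called repeatedly with identical arguments. `TraceEvent`, `RunTrace`, the `kind` labels, and the payload keys `tool` and `args` are all hypothetical, and payloads are assumed to be JSON-serializable.

```python
import json
import time
import uuid
from collections import Counter
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class TraceEvent:
    run_id: str
    step: int
    kind: str  # e.g. "tool_call", "tool_result", "model_output"
    payload: dict[str, Any]
    timestamp: float = field(default_factory=time.time)


class RunTrace:
    """Collects every step of one agent run so failures can be replayed."""

    def __init__(self, prompt_version: str) -> None:
        self.run_id = uuid.uuid4().hex
        self.prompt_version = prompt_version
        self.events: list[TraceEvent] = []

    def record(self, kind: str, payload: dict[str, Any]) -> TraceEvent:
        event = TraceEvent(self.run_id, len(self.events), kind, payload)
        self.events.append(event)
        return event

    def repeated_tool_calls(self, threshold: int = 3) -> list[str]:
        """Tools called `threshold`+ times with identical arguments."""
        counts = Counter(
            (
                event.payload.get("tool", "<unknown>"),
                json.dumps(event.payload.get("args"), sort_keys=True),
            )
            for event in self.events
            if event.kind == "tool_call"
        )
        return sorted({tool for (tool, _), n in counts.items() if n >= threshold})

    def to_jsonl(self) -> str:
        """One JSON line per event, tagged with the prompt version."""
        return "\n".join(
            json.dumps({"prompt_version": self.prompt_version, **asdict(event)})
            for event in self.events
        )
```

Tagging every event with the prompt version is the part that pays off later: when behavior changes after a patch or a forced model upgrade, you can diff traces across versions instead of guessing.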

The Real Bottleneck

The industry still talks about AI as if intelligence is the bottleneck. In production, intelligence is usually the easy part.

The hard part is building systems that remain stable while the prompts grow, the models change, the tools evolve, and the edge cases accumulate.

Stable AI systems are going to matter more than impressive demos.

Most companies are still optimizing for demos. The ones that win will optimize for systems that are still understandable a year later.