RAG Explained: Why Retrieval Quality Wins Over AI Model Size

Most AI hallucinations on private data are not model failures; they are retrieval failures caused by messy data, weak metadata, and broken search architecture.

PHASE 2: STRATEGIC PRE-FLIGHT REPORT

- Dominant Search Intent: Strategic ROI and Accuracy. The reader wants to know why “smart” AI models fail on private data and how to fix the accuracy bottleneck.

- Hidden Reader Anxiety: “I’m paying for the most expensive AI models, but they still make mistakes on my data. Is AI just a hype cycle, or is my data the problem?”

- Canonical Framing Goal: The Open-Book Exam Paradox. A PhD student (Large Model) will still fail an exam if the textbook they are given (Retrieval) is missing pages or has a corrupted index.

- One Asymmetric Insight: RAG accuracy is an Information Retrieval (IR) problem masquerading as an AI problem. High-fidelity AI in 2026 is a data engineering cost, not an inference cost.

- One Contrarian Truth: Most enterprise RAG projects are just “Broken Search Engines” with an expensive chat interface.

-

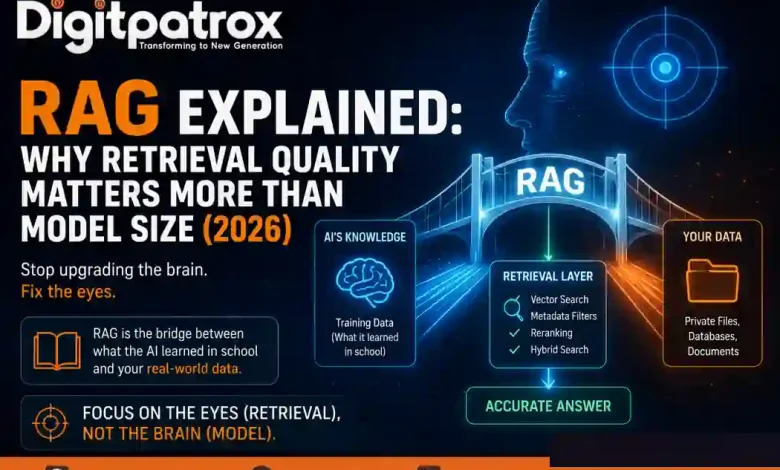

Simplest Mental Model: RAG is the “bridge” between what the AI learned in school (training data) and the specific files in its briefcase (your data).

-

One Sticky Phrase: “Focus on the eyes (retrieval), not the brain (model).”

- Real Operational Failure Pattern: The Context Poisoning Trap. A fintech support team indexed three years of Slack archives and legacy PDFs into a single Pinecone namespace. The AI started quoting deprecated 2023 refund policies because they matched “cancellation” queries more closely in embedding space than the 2026 SOP did. The model wasn’t hallucinating; the retrieval layer was feeding it operational garbage.

PHASE 3: EXECUTIVE SUMMARY (5 BULLETS)

- The RAG Core: Retrieval-Augmented Generation (RAG) is the architecture that allows an AI to “look” at your specific, private files before answering a question.

- Retrieval > Intelligence: On questions about your own data, a smaller, faster model (like Llama 3 8B) with clean, well-ranked retrieval can outperform a frontier model (like GPT-4o) fed messy, poorly indexed data.

- The Latency-Accuracy Trade-off: High accuracy usually requires reranking and hybrid search. These add a fraction of a second to response time but can prevent costly operational errors.

- The 1M Token Myth: Large context windows are not a replacement for RAG. Without retrieval architecture, “context dilution” can cause models to miss facts even when those facts are in the prompt.

- Tactical Bottom Line: If your AI is inaccurate, do not upgrade the model first. Audit your Vector SEO (how reliably the retriever surfaces the right passages) and your data chunking to fix the information architecture.

PHASE 4: WORDPRESS-READY ARTICLE

RAG Explained: Why Retrieval Quality Matters More Than Model Size

Retrieval-Augmented Generation (RAG) is the technical bridge that connects a general AI model to your private, real-time data. In 2026, the competitive advantage has shifted. It is no longer about who has the biggest “brain” (the model) but about who has the best “eyes” (the retrieval layer). If your AI is hallucinating on your private data, upgrading your model is usually an expensive mistake. You don’t have an intelligence problem; you have an information architecture problem.

What is RAG? (The PhD with a Missing Textbook)

RAG (Retrieval-Augmented Generation) is an architectural pattern in which an AI system searches a specific, private knowledge base for relevant facts before answering a prompt. This grounds the answer in your data rather than in the model’s training knowledge, which may be outdated. It also works around the “Knowledge Cutoff” problem for anything you have indexed.

Think of it as the Open-Book Exam Paradox. You can hire a PhD (a large model like GPT-4o) to sit the exam. They are incredibly smart. But if the textbook you give them is missing pages, contains outdated information, or has a broken index, even that PhD will fail the test.

Most companies spend their entire budget trying to “hire a smarter student” when they should be fixing the textbook.

The Modern RAG Architecture Map

To understand why systems fail, you have to look at the “plumbing.” Here is a typical layered RAG pipeline in 2026:

| Layer | Purpose | Common Failure Pattern |
| --- | --- | --- |
| Embedding Model | Converts meaning into vectors | Weak semantic encoding |
| Vector DB | Retrieves similar content | High retrieval “noise” / No namespaces |
| Metadata Filters | Removes outdated docs | Missing “freshness” rules (Date/Status) |
| Reranker | Validates relevance | Assuming the first search result is right |
| LLM Generation | Writes the final answer | Hallucinated synthesis from bad data |
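The following is a minimal, self-contained Python sketch of the five layers in the table. Every name in it (`Doc`, `embed`, `vector_search`, `metadata_filter`, `rerank`, `rag_prompt`) is hypothetical. Each layer is also a toy stand-in:

- A bag-of-words counter replaces a real embedding model.
- A brute-force sort replaces a vector database.
- Keyword overlap replaces a trained reranker.

The LLM call is left out. The sketch stops at the grounded prompt the model would receive.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Doc:
    doc_id: str
    text: str
    metadata: dict = field(default_factory=dict)


def embed(text: str) -> Counter:
    # Embedding layer (toy stand-in): bag-of-words counts instead of a learned embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def metadata_filter(docs: list[Doc]) -> list[Doc]:
    # Metadata layer: keep only documents explicitly tagged as final.
    return [d for d in docs if d.metadata.get("status") == "final"]


def vector_search(query: str, docs: list[Doc], k: int = 5) -> list[Doc]:
    # Vector DB layer: brute-force similarity ranking (a real store would use an ANN index).
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d.text)), reverse=True)[:k]


def rerank(query: str, docs: list[Doc], top_n: int = 2) -> list[Doc]:
    # Reranker layer (toy stand-in): re-score candidates by distinct query terms they contain.
    q_terms = set(embed(query))
    return sorted(docs, key=lambda d: len(q_terms & set(embed(d.text))), reverse=True)[:top_n]


def build_prompt(query: str, docs: list[Doc]) -> str:
    # Generation layer input: the LLM call is out of scope, so we stop at the grounded prompt.
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return f"Answer only from the sources below and cite their IDs.\n{sources}\n\nQuestion: {query}"


def rag_prompt(query: str, corpus: list[Doc]) -> str:
    # Filter before searching so stale drafts cannot crowd final docs out of the top-k.
    candidates = metadata_filter(corpus)
    return build_prompt(query, rerank(query, vector_search(query, candidates)))


corpus = [
    Doc("refund-2023-draft", "Refund policy for cancellation: full refund within 60 days.", {"status": "draft"}),
    Doc("refund-2026-final", "Refund policy for cancellation: refund within 14 days of purchase.", {"status": "final"}),
    Doc("holidays-2026", "Office holiday schedule for 2026.", {"status": "final"}),
]
print(rag_prompt("What is the refund policy for a cancellation?", corpus))
```

The sketch applies the metadata filter before the similarity search. If you filter only after taking a small top-k, stale drafts can fill every slot and leave nothing valid. For this reason, many vector stores accept metadata filters as part of the query itself.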

Most Enterprise RAG Projects Are Just “Broken Search Engines”

The success of a RAG system depends largely on its retrieval accuracy: specifically, how well it handles semantic search, reranking, and metadata filtering. If your system cannot distinguish between a 2023 “Draft” and a 2026 “Final” document, your AI will confidently quote the wrong information, regardless of the model’s parameter count.

Here’s where this gets messy. I’ve seen pilots become unusable because a team embedded three years of Slack archives and ticket histories without any filtering or metadata rules. The retrieval layer then starts surfacing context that is semantically adjacent but operationally irrelevant.

You aren’t fighting a hallucination; you are fighting a search ranking failure. If your Vector SEO is weak, your AI is essentially a high-speed search engine that likes to make things up when it gets confused.
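One way to tell a ranking failure from a model failure is to measure retrieval on its own, before any LLM is involved. The sketch below makes two assumptions:

- You have a small labeled set of (question, correct document ID) pairs.
- You have a `retrieve` function that returns ranked IDs.

Both of these, along with `hit_rate_at_k`, are hypothetical. The function reports the share of questions whose correct document lands in the top k results. It also lists the misses so you can inspect them.

```python
from typing import Callable, Sequence


def hit_rate_at_k(
    retrieve: Callable[[str], Sequence[str]],
    eval_set: list[tuple[str, str]],
    k: int = 3,
) -> tuple[float, list[str]]:
    """Return (hit rate, missed questions) for labeled (question, expected_doc_id) pairs."""
    if not eval_set:
        raise ValueError("eval_set must not be empty")
    # A "hit" means the known-correct document appears among the top-k retrieved IDs.
    misses = [q for q, expected in eval_set if expected not in list(retrieve(q))[:k]]
    return 1 - len(misses) / len(eval_set), misses


# Hypothetical retriever output, hard-coded so the example runs on its own.
ranked = {
    "How do I cancel my plan?": ["refund-2023-draft", "billing-faq", "cancel-macro", "refund-2026-final"],
    "What is the refund window?": ["refund-2026-final", "billing-faq"],
}
labels = [
    ("How do I cancel my plan?", "refund-2026-final"),
    ("What is the refund window?", "refund-2026-final"),
]
rate, misses = hit_rate_at_k(lambda q: ranked[q], labels)
print(rate, misses)  # 0.5 ['How do I cancel my plan?']
```

A low hit rate here points at chunking, metadata, or ranking. A model upgrade cannot fix a document that never reaches the prompt.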

The Latency vs. Accuracy Trade-off

Building production-grade AI requires choosing your trade-offs. You cannot have instant responses and 100% accuracy simultaneously.

- Aggressive Reranking: Adds roughly 200 ms of latency but can materially improve accuracy by having a second, more precise model re-score the search engine’s top candidates.

- Hybrid Search: Combines Vector Search (meaning) with Keyword Search (exact matches). This is mandatory if your AI needs to find exact Policy IDs or legal clauses.

- Small Chunking: Prevents “Context Drowning” by giving the AI short snippets of a few hundred tokens instead of 50-page chapters.
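Hybrid search typically runs a keyword search (such as BM25) and a vector search separately, then merges the two result lists. One common merging method is Reciprocal Rank Fusion (RRF). In RRF, each list contributes `1 / (k + rank)` to a document's score, and `k = 60` is a widely used default.

The sketch below assumes both rankings have already been computed. They are hard-coded, hypothetical IDs so the example runs on its own.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists of doc IDs; each list adds 1 / (k + rank) to a document's score."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical result lists for the query "What does POL-4471 say about cancellations?"
vector_ranking = ["cancellation-faq", "refund-2023", "pol-4471"]  # meaning-based: the exact ID barely registers
keyword_ranking = ["pol-4471", "cancellation-faq"]                # exact-match: the ID token hits directly
fused = reciprocal_rank_fusion([vector_ranking, keyword_ranking])
print(fused)  # ['cancellation-faq', 'pol-4471', 'refund-2023']
```

Vector search ranked the policy `pol-4471` last. After fusion it moves up to second, because the keyword ranking matched the exact identifier. RRF uses only ranks, so you never have to reconcile BM25 scores with cosine similarities.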

Mini Failure Scenario: The Refund Disaster

A customer support team indexed three years of Zendesk tickets, Slack archives, and legacy refund PDFs into a single Pinecone namespace.

A customer asked about a cancellation. The retrieval layer surfaced a deprecated 2023 refund policy because it “semantically matched” the query better than the 2026 SOP. The AI answered confidently. The support agent followed the AI’s lead. Finance eventually had to reverse 200 incorrect refunds manually.

The Lesson: The AI wasn’t hallucinating. The retrieval stack failed because it lacked a metadata “freshness” filter.
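A freshness filter like the one this lesson calls for can be sketched as two rules applied to candidates before ranking:

1. Keep only final documents that are already in effect.
2. Keep only the newest version of each policy.

The `Chunk` fields (`policy_key`, `status`, `effective_date`) and the `freshness_filter` function are hypothetical. In production, you would usually push the same conditions into your vector store's metadata filter.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    policy_key: str       # hypothetical field: groups all versions of the same policy
    status: str           # e.g. "draft" or "final"
    effective_date: date
    text: str


def freshness_filter(chunks: list[Chunk], as_of: date) -> list[Chunk]:
    # Rule 1: only final documents that are already in effect.
    eligible = [c for c in chunks if c.status == "final" and c.effective_date <= as_of]
    # Rule 2: for each policy, keep only the most recent effective version.
    latest: dict[str, date] = {}
    for c in eligible:
        if c.policy_key not in latest or c.effective_date > latest[c.policy_key]:
            latest[c.policy_key] = c.effective_date
    return [c for c in eligible if c.effective_date == latest[c.policy_key]]


chunks = [
    Chunk("refund-2023", "refund", "final", date(2023, 3, 1), "Full refund within 60 days of cancellation."),
    Chunk("refund-2026", "refund", "final", date(2026, 1, 15), "Refund within 14 days of purchase."),
    Chunk("refund-draft", "refund", "draft", date(2026, 6, 1), "Proposed: store credit only."),
    Chunk("shipping-2024", "shipping", "final", date(2024, 5, 10), "Free shipping over $50."),
]
print([c.doc_id for c in freshness_filter(chunks, as_of=date(2026, 5, 1))])
# ['refund-2026', 'shipping-2024']
```

With this rule in place, the 2023 refund policy can no longer outrank the 2026 SOP, however closely it matches the query semantically.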

Why 1M Token Context Windows Don’t Eliminate RAG

Large context windows (Gemini 1.5 Pro supports 1M tokens or more; Claude 3.5 Sonnet supports 200K) reduce retrieval pressure but create a new problem: context dilution. When too many documents enter a prompt at once, several things happen:

- The share of relevant text falls.
- Token costs spike.
- The model struggles with “Lost in the Middle” failures, where facts buried in the middle of a long prompt are overlooked.

Bigger context windows are not a replacement for retrieval architecture; they are a multiplier for good retrieval systems. If you paste a 1-million-token data dump into a prompt, the signal-to-noise ratio drops. The AI may find the fact but fail to prioritize it over contradictory information elsewhere in the same prompt.

In 2026, the goal is to use RAG to find the “Perfect 5,000 tokens” rather than using a massive window for “1,000,000 mediocre tokens.”
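Finding the right 5,000 tokens is partly a ranking problem and partly a budgeting problem. Here is a minimal sketch of the budgeting half: walk the reranked chunks in order and keep each one that still fits.

The 4-characters-per-token estimate in `approx_tokens` is a rough rule of thumb for English text, not a real tokenizer. Both function names are hypothetical.

```python
def approx_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token for English text.
    # Swap in your model's real tokenizer for production budgets.
    return max(1, len(text) // 4)


def pack_context(ranked_chunks: list[str], budget_tokens: int = 5000) -> list[str]:
    """Greedily keep the highest-ranked chunks that fit within the token budget."""
    selected: list[str] = []
    used = 0
    for chunk in ranked_chunks:
        cost = approx_tokens(chunk)
        if used + cost > budget_tokens:
            continue  # too big to fit; a smaller, lower-ranked chunk may still fit
        selected.append(chunk)
        used += cost
    return selected


ranked = ["a" * 400, "b" * 20_000, "c" * 800]  # ~100, ~5,000 and ~200 tokens
packed = pack_context(ranked, budget_tokens=5000)
print([len(c) for c in packed])  # [400, 800]
```

Using `continue` rather than `break` is a design choice. An oversized chunk is skipped, but smaller, lower-ranked chunks can still fill the remaining budget. Use `break` instead if strict rank order matters more than filling the window.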

The Security & Governance Wall

As RAG becomes the backbone of enterprise AI systems, it introduces Retrieval Poisoning. If an attacker injects malicious instructions into indexed documentation, the AI may retrieve and obey those instructions during generation.

Furthermore, RAG must respect Permission Inheritance: every retrieved chunk should carry the access rights of its source document. If your system doesn’t check those rights at query time, an intern using AI for productivity could accidentally “retrieve” sensitive executive payroll data. It only takes that data being semantically similar to a query about “salary structures.” For more, see our guide on AI Cybersecurity in 2026.
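Permission inheritance can be enforced in two steps:

1. At ingestion, copy each source document's access groups onto its chunks.
2. At query time, filter on the caller's groups before anything reaches the prompt.

The sketch below assumes group-based ACLs, and `Chunk`, `allowed_groups`, and `authorized_chunks` are hypothetical names. It denies by default, so a chunk imported without an ACL is never returned.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    # Copied from the source system's ACL at ingestion time (hypothetical field name).
    allowed_groups: frozenset = frozenset()


def authorized_chunks(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    # Deny by default: a chunk with no ACL groups shares no group with anyone.
    return [c for c in chunks if c.allowed_groups & user_groups]


chunks = [
    Chunk("exec-payroll", "Executive salary bands by level.", frozenset({"hr", "exec"})),
    Chunk("handbook", "Salary structures are reviewed annually.", frozenset({"all-staff"})),
    Chunk("orphan", "Imported file with no ACL."),
]
intern = {"all-staff", "interns"}
print([c.doc_id for c in authorized_chunks(chunks, intern)])  # ['handbook']
```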

Practical Implementation Steps

If you are building or fixing a RAG system tomorrow, follow this sequence:

- Data Sanitation: Delete duplicate docs and deprecated “Drafts” before ingestion.

- Define Metadata: Tag every file with `status: final`, `department`, and `date_created`.

- Choose the Store: Use pgvector if you want vectors inside Postgres alongside your SQL data, or Pinecone for managed, massive horizontal scale.

- Optimize Chunking: Aim for 200–400 tokens with 15% overlap to preserve context across chunk boundaries.

- Test Retrieval First: Search your database manually. If the top 3 results don’t contain the answer, your LLM will never find it.

- Add Reranking: Use a model like Cohere Rerank to validate the results before they hit the LLM.
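The chunking step can be sketched as a sliding window. This version assumes whitespace-split words as a stand-in for tokens; real pipelines would count with the embedding model's tokenizer. It uses a hypothetical `chunk_tokens` function with a 300-token window, inside the suggested 200–400 range, and 15% overlap. Each window therefore starts 255 tokens after the previous one and shares its last 45 tokens.

```python
def chunk_tokens(tokens: list[str], size: int = 300, overlap_ratio: float = 0.15) -> list[list[str]]:
    """Split tokens into fixed-size windows; consecutive windows share ~overlap_ratio * size tokens."""
    if size <= 0 or not 0 <= overlap_ratio < 1:
        raise ValueError("size must be positive and overlap_ratio must be in [0, 1)")
    overlap = round(size * overlap_ratio)
    step = size - overlap
    if step <= 0:
        raise ValueError("overlap must be smaller than size")
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the document
    return chunks


# Whitespace-split words stand in for real tokenizer tokens here (an assumption).
words = [f"w{i}" for i in range(1000)]
chunks = chunk_tokens(words)  # size 300, overlap 45, step 255
print(len(chunks), [len(c) for c in chunks])  # 4 [300, 300, 300, 235]
```

The overlap means that any sentence of up to 45 tokens cut at a chunk boundary still appears whole in the next chunk.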

Conclusion: The Era of Operational Truth

The next generation of AI winners will not be determined by who owns the largest models or the biggest context windows. They will be determined by who can retrieve the right operational truth faster, safer, and more reliably than everyone else.

If your AI pilot is failing, don’t buy a bigger brain. Fix the eyes. Move from AI agents as a concept to AI agents that actually save time because they are grounded in your operational truth. That grounding can come through a RAG pipeline or through data connections such as the Model Context Protocol (MCP).

Accuracy in the AI era is an information architecture problem. Stop looking for a smarter PhD; start fixing the textbook.

FAQ Section

- What is RAG? RAG stands for Retrieval-Augmented Generation. It allows an AI to “retrieve” specific, factual documents from your private database before “generating” a response.

- Can RAG stop AI hallucinations? It can sharply reduce them by “grounding” the AI in factual data, but it cannot eliminate them. When a model is required to cite its sources from your database, its likelihood of making things up drops significantly, provided retrieval surfaces the right documents.

- Is RAG better than a 1M token context window? For most enterprise workloads, yes. RAG is cheaper and faster per query, and it limits “context dilution” by sending only the most relevant passages. The two also combine well: RAG selects the right content, and a large window gives it room.